Inside the American High School Where Final Exams Are Now Prompt-Only

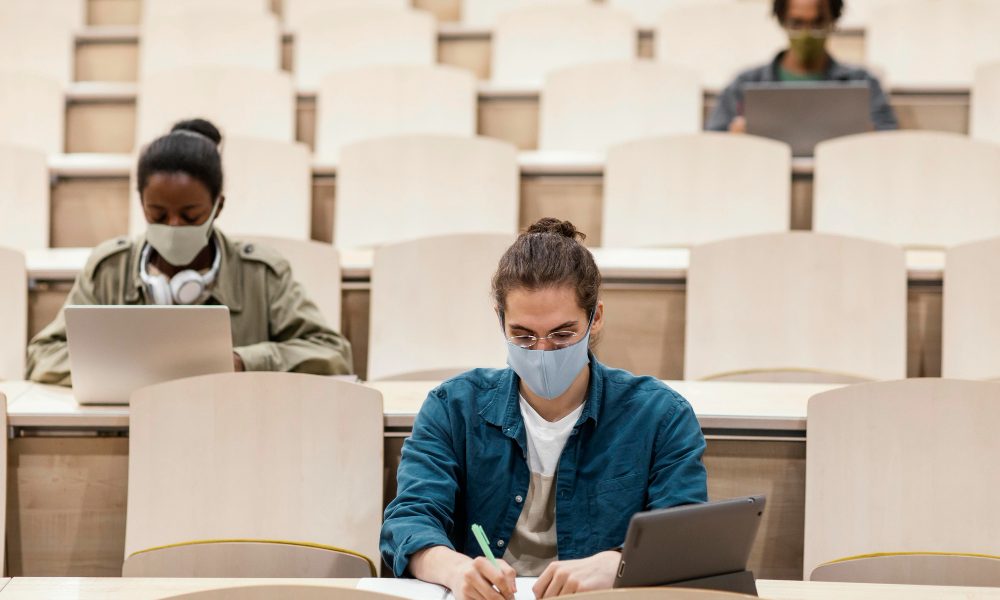

On a quiet weekday morning in a public high school outside of Boston, the ritual of finals week seems oddly muted. There are no piles of blue exam booklets. No teachers prowl the aisles like guardians of a national secret. Instead, rows of students sit with laptops open, staring at blank prompt windows. The instructions for their final exam fit in a single sentence: “Create the most effective prompt you can.”

The American high school final exam followed a well-known format for many years: handwritten essays, multiple-choice exams, and perhaps a few diagrams scrawled on scratch paper. Students committed facts, formulas, or historical dates to memory in the hope that their preparation would match what was on the exam sheet. Although it wasn’t flawless, it was predictable.

| Category | Details |
|---|---|
| Topic | AI-based assessment in high schools |
| Location | United States (pilot high school program) |
| Education Level | K–12 Secondary Education |
| System Context | Decentralized U.S. education system |
| Key Idea | Students evaluated through AI prompts rather than traditional exams |
| Age Group | Typically 14–18 years old |
| Reference | https://www.ed.gov |

This school decided to try something different. Last year, teachers noticed a pattern that was hard to ignore, so they quietly started the experiment. Homework submissions were becoming unusually polished. Essays read like expert commentary. Math explanations seemed flawlessly organized, at times unsettlingly so. Artificial intelligence tools had quickly become a part of student life.

One English teacher recounted her realization that the ground had changed. She witnessed a student discreetly paste an entire chapter into an AI chatbot during a discussion of Frederick Douglass’s autobiography and receive a polished paragraph of literary analysis in a matter of seconds. As if everyone had read the same prewritten interpretation, the class discussion that ensued sounded oddly hollow.

“It flattened the discussion,” she later told coworkers. Something had to change.

The simplicity of the school’s solution was radical. Rather than outlawing AI tools, which many educators believed would fail almost instantly, they chose to integrate them straight into the testing procedure. The AI’s output would not be used to grade students. The prompt itself would be used to grade them.

To put it another way, the question turned into the test.

Watching students navigate this new system feels different from watching a traditional exam room. The atmosphere has shifted toward experimentation, though there is still tension; finals week always carries a certain nervous energy. Some students type slowly and edit their prompts repeatedly, trying to get better answers from the AI model. Others sit with their arms crossed and think before writing anything at all.

A junior shrugged as he explained the procedure. He remarked, “It’s like arguing with the computer.”

Instructors maintain that the approach assesses more than just memorization. Writing a good prompt requires a thorough understanding of the topic in order to direct the AI toward helpful responses. If a student does not understand the fundamental idea, the chatbot’s response quickly becomes vague or misleading. That feedback loop is now part of the learning process.

However, not everyone agrees with the experiment.

Some educators contend that using AI tools during tests makes it harder to distinguish between machine assistance and student knowledge. After all, the purpose of traditional final exams was to demonstrate a student’s ability to remember and apply knowledge on their own. For some educators, integrating AI into the testing environment is tantamount to surrender.

It’s easy to feel that tension as you walk through the hallway outside the testing rooms.

Academic integrity posters are still displayed on the walls. Thick textbooks that once defined the curriculum are still on the library shelves. However, students are using technology in the classroom that can quickly summarize entire chapters.

The change feels both academic and cultural. There isn’t a single national education system in the US, and schools frequently try out various teaching strategies. This adaptability has led to the creation of project-based learning initiatives and charter schools. However, incorporating AI into the fundamental framework of tests seems like a more significant shift.

Some educators are concerned that it might promote mental short cuts. Others hold the opposite view.

During a staff meeting, one science instructor put it plainly. “Students already have AI in their pockets,” he said. “They don’t learn anything from pretending otherwise.”

He contends that teaching students how to use technology responsibly should be the main goal of education. Writing specific prompts forces them to think critically about what they want to learn. It also shows how easily, depending on the wording, an AI system can be led to either deeper analysis or superficial responses.

During finals week, that lesson becomes surprisingly apparent as you watch students test various prompts.

A vague question yields a generic answer. A well-crafted one yields something much more intriguing.

Additionally, a minor psychological change is occurring. Recall—remembering definitions, dates, and equations—was frequently rewarded in traditional exams. Exams that are prompt-based reward curiosity. Pupils who ask more insightful questions typically get more insightful answers.

There is a philosophical quality to that dynamic. The notion that knowledge is passed down from teacher to pupil has been the foundation of education for centuries. A third party is now present in the room as technology inserts itself into that conversation. Teachers guide the process. Students explore it. The AI sits silently in the center, answering any query that appears on the screen.

As this develops, there’s an odd mix of hope and trepidation. Prompt-based tests might continue to be a specialized experiment and an interesting anecdote in the history of educational reform. Many innovations have been tried in schools in the past, but not all of them have been successful. However, there’s also a feeling that something basic has changed.

Pupils are no longer merely taking in knowledge. They are learning how to question it.

And that ability—the ability to ask the right question—may prove to be the most difficult test of all in a world where answers can appear instantly on a screen.
